The Algorithmic Jury: Why AI Detectors are Erasing Objective Reality

The Impossible Crowd: A Digital Firestorm

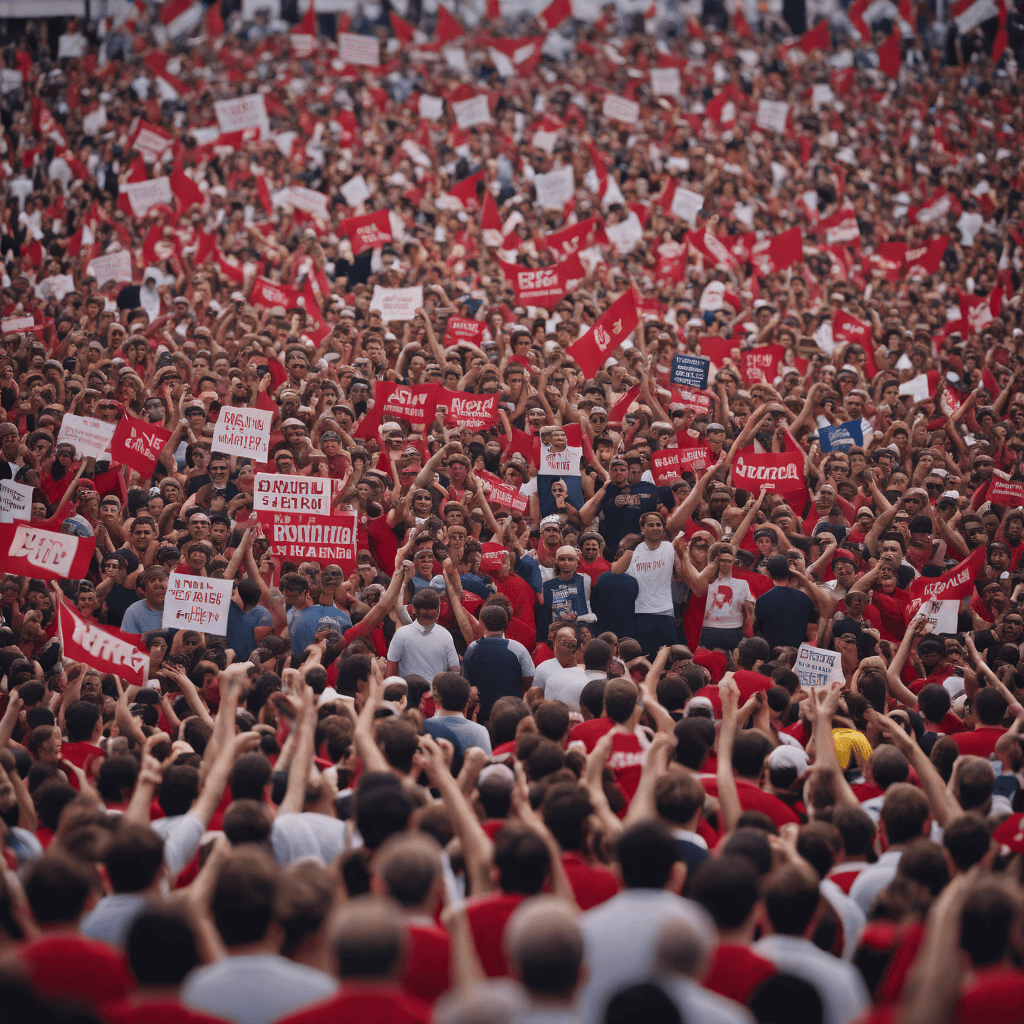

In the biting chill of February 2026, a single high-resolution image of a political rally in Fukuoka began its descent into the digital abyss. The photograph, capturing a sea of thousands gathered for a coalition speech, should have been a standard piece of political record. Instead, it became a primary casualty of the "post-proof" era. Within hours of being posted, automated detection bots—now the ubiquitous, self-appointed gatekeepers of social media—stamped the image with a "98% Synthetic" warning. This immediate technical verdict triggered a digital firestorm where authentic political participation was dismissed as an algorithmic hallucination, proving that in the current landscape, the machine’s judgment often outweighs the eye’s witness.

The speed of this rejection highlights a widening gap between AI generation and the ability to verify it in real-world settings. According to the NTIRE 2026 Challenge on Robust AI-Generated Image Detection in the Wild, detecting synthetic artifacts in "wild" conditions—such as compressed social media files or crowded urban scenes—remains a persistent difficulty. The challenge framework notes that detection technology consistently lags approximately six months behind the newest generative models, creating a window where errors are inevitable. For David Chen (pseudonym), a digital forensics analyst who monitored the social media backlash, the irony was palpable: the tools meant to protect truth were being used to erase it. Chen observes that the sheer density of a human crowd often generates "statistical noise" that automated systems frequently misidentify as generative artifacts.

The Architecture of Failure: Why the 'Wild' Breaks the Machine

The failure of AI detection in the "wild" is not a mere technical glitch; it is a fundamental architectural limitation of current digital forensics, one that threatens the integrity of the US media landscape. Because detection frameworks lag roughly six months behind the newest generation of GAN and diffusion models, the "wild" becomes a blind spot for algorithmic oversight. This gap suggests that the current reliance on automated arbiters rests on a foundation of rapid obsolescence, leaving public figures and citizens alike vulnerable to false accusations of fabrication.

Dr. Hany Farid, a Professor of Computer Science and Digital Forensics at UC Berkeley, argues that the statistical noise of thousands of faces can mimic the synthetic artifacts of a Generative Adversarial Network (GAN). When a camera captures a protest or a political rally, the sheer volume of overlapping textures and blurred features creates the very "noise" that AI detectors are trained to identify as signs of manipulation. This results in the "liar's dividend," a dangerous secondary effect where real evidence is dismissed as AI-generated simply because it is too complex for the machine to parse, allowing bad actors to hand-wave away authentic documentation of their actions.

While raw detection accuracy stands at 92.4% according to the SynthID/University Research Consortium, the remaining 7.6% margin of error is where political reality is currently being contested. In a deregulated market where speed-to-market is the primary metric of success, social platforms often deploy these unrefined detection tools to mitigate liability, regardless of their accuracy in complex scenarios. This creates a fundamental tension between the Trump administration's goal of unchecked innovation and the individual's right to digital existence.

Hegemony Over Safety: The Deregulatory Trap

This technical fallibility is not an isolated glitch but a systemic risk exacerbated by the current regulatory pivot in Washington. The Trump administration’s recent Executive Order on Deregulating AI Innovation emphasizes an "America First" policy designed to accelerate technological growth by minimizing regulatory burdens. By revoking previous safety reporting mandates established in 2023, the administration aims for global AI hegemony, yet this hands-off approach has left the public square vulnerable to algorithmic errors.

The White House policy signals a decisive pivot toward technological growth, explicitly prioritizing US leadership against global competitors like China. By preempting state-level safety mandates that could hinder speed-to-market, the administration has cleared the path for a flood of new generative models without a corresponding investment in public-facing verification infrastructure. This minimal regulatory burden may secure market dominance, but it leaves the individual citizen standing on a foundation of shifting sand where the tools of truth-seeking are secondary to the goals of national momentum.

For individuals like Michael Johnson (pseudonym), a political communications aide whose authentic rally footage was recently flagged as a deepfake by a major social media platform, the consequences are immediate. Johnson’s experience highlights the "post-proof" landscape, where a machine's 7.6% error rate, the complement of the 92.4% raw accuracy reported by the SynthID/University Research Consortium, can effectively erase a person's participation in the political process. At the scale of a major platform, that margin represents thousands of authentic moments that are now vulnerable to algorithmic delegitimization.
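To put that margin in concrete terms, the arithmetic can be sketched in a few lines of Python. This is a rough illustration, not a measurement: the 92.4% figure comes from the consortium cited above, while the upload volumes and the function name `expected_false_flags` are hypothetical. It also assumes the error rate falls evenly on authentic images, which a single raw accuracy number does not actually establish.

```python
# Illustrative arithmetic only. The 92.4% raw accuracy is the figure cited in
# the article; the upload volumes below are hypothetical.
RAW_ACCURACY = 0.924


def expected_false_flags(authentic_images: int, accuracy: float = RAW_ACCURACY) -> float:
    """Expected number of authentic images wrongly labeled synthetic.

    Assumption: the detector's error rate (1 - accuracy) applies uniformly
    to authentic images. A raw accuracy figure alone does not separate
    false positives from false negatives, so this is a rough estimate.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if authentic_images < 0:
        raise ValueError("authentic_images must be non-negative")
    return authentic_images * (1.0 - accuracy)


if __name__ == "__main__":
    # Hypothetical volumes of authentic uploads passing through a filter.
    for volume in (1_000, 100_000):
        flagged = round(expected_false_flags(volume))
        print(f"{volume:,} authentic images -> about {flagged:,} wrongly flagged")
```

Under these assumptions, roughly 76 of every 1,000 authentic images would carry a false "synthetic" label, which is how a seemingly small error rate scales into thousands of contested moments.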

The Liar’s Dividend: Weaponizing the Shadow of Doubt

The existence of sophisticated AI generation has birthed a corrosive psychological alibi for the powerful: the ability to dismiss any inconvenient reality as a digital fabrication. As the Trump administration accelerates the deregulation of domestic AI development, this "liar’s dividend" has moved from a theoretical concern to a central strategy in American political discourse. In a high-stakes political environment, the mere existence of generative tools provides a permanent shadow of doubt that bad actors can cast over legitimate investigations, effectively erasing accountability through the claim of "AI interference."

Consider the case of David Chen, a local activist who captured genuine footage of an infrastructure failure during recent winter storms. Because the statistical noise of crowded scenes can mimic synthetic artifacts, Chen’s authentic footage was suppressed by an automated platform filter. The label effectively silenced his report, as the algorithmic judgment superseded his own testimony. When the lens of technology can no longer distinguish between a citizen’s witness and a computer’s whisper, the first casualty is the citizen's ability to prove their presence in the democratic process.

The Return of the Witness: Hybrid Verification as the New Standard

The traditional role of the photograph as an objective "visual receipt" has fundamentally collapsed. This shift demands a radical return to human-centric journalistic verification, as the reliance on automated "truth-meters" proves increasingly brittle. Metadata analysis and on-the-ground corroboration must now take precedence over the "black box" verdict of a detection algorithm. We are entering an era where the image can no longer stand alone; it requires a provenance of human testimony and context-aware reporting to survive the algorithmic filter.

For James Carter (pseudonym), a digital forensics analyst based in Washington D.C., the shift back to traditional fundamentals is not a regression, but a survival strategy. He argues that the recovery of physical memory cards and the testimony of multiple independent witnesses are the only viable antidotes to a landscape where algorithms are both the crime and the incompetent judge. The loss of the visual receipt signifies more than just a technological shift; it marks the end of an era where seeing was believing, forcing the reconstruction of a shared reality on the much more difficult terrain of verified intent.

If the current trend of deregulation continues, the burden of proof will shift from the creator of the fake to the witness of the truth, forever altering the fabric of American constitutional rights to expression and assembly. If the definition of reality is delegated to an algorithm that admits a margin of error, history risks becoming little more than the most convincing hallucination.

Sources & References

NTIRE 2026 Challenge on Robust AI-Generated Image Detection in the Wild

CVPR Workshop on New Trends in Image Restoration and Enhancement (NTIRE) • Accessed 2026-02-06

Technical framework highlighting the persistent difficulty of detecting AI images in 'wild' (real-world) conditions, such as compressed social media photos or crowded street scenes, due to evolving GAN and diffusion model artifacts.

Executive Order on Deregulating AI Innovation (Draft/2026 Context)

U.S. White House • Accessed 2026-02-06

The Trump 2.0 administration's pivot toward 'America First' AI policies, emphasizing deregulation to accelerate technological growth while preempting state-level safety mandates that could hinder speed-to-market.

AI Image Detection Accuracy (Raw): 92.4%

SynthID/University Research Consortium • Accessed 2026-02-06

AI Image Detection Accuracy (Raw) recorded at 92.4% (2026)

Dr. Hany Farid, Professor of Computer Science and Digital Forensics

UC Berkeley • Accessed 2026-02-06

The danger of AI detection is not just missing a fake, but the 'liar's dividend' where real evidence is dismissed as AI-generated. Crowds are particularly hard because the statistical noise of thousands of faces can mimic the synthetic artifacts of a GAN.
